Top news of the week: 05.04.2023.

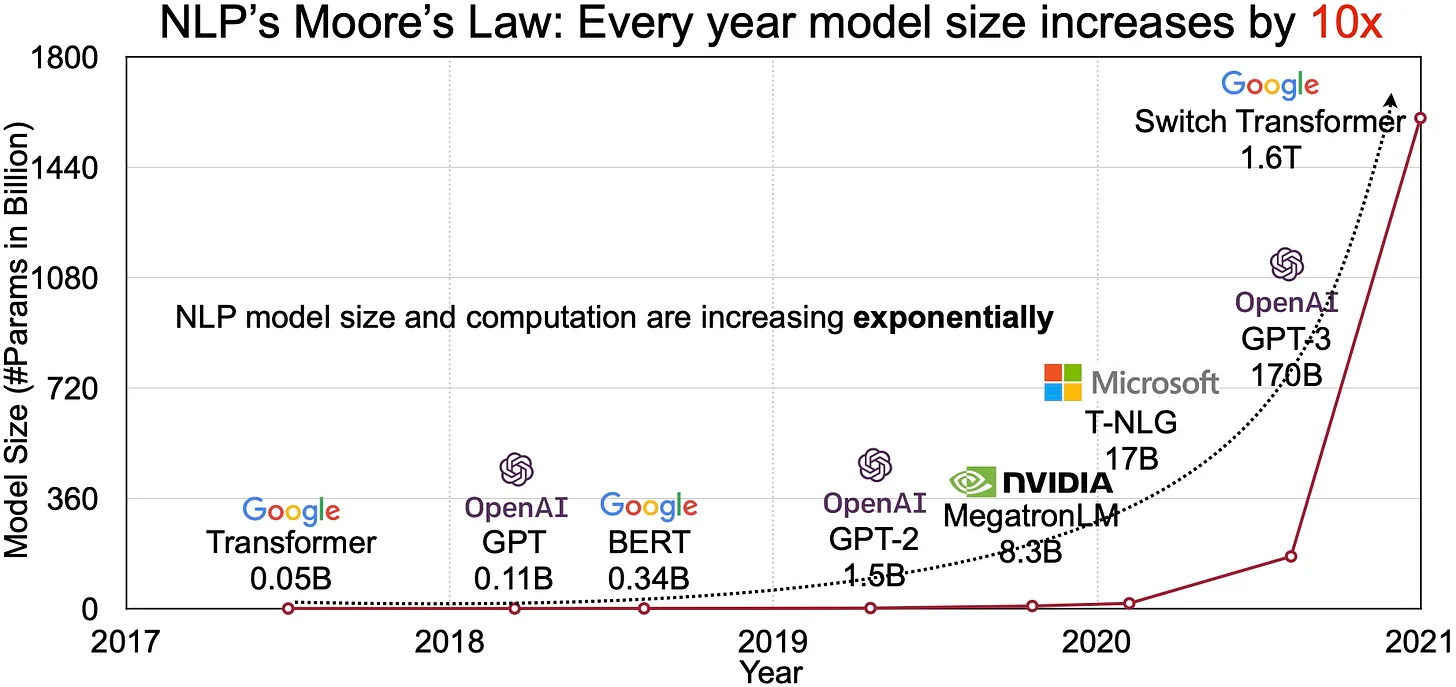

Scaling vision transformers to 22 billion parameters

To enable this scaling, ViT-22B incorporates ideas from scaling text models like PaLM, with improvements to both training stability (using QK normalization) and training …
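As a rough illustration of the QK normalization mentioned above, here is a minimal sketch. It assumes that QK normalization means applying layer normalization to each query and key vector before the scaled dot product, as the ViT-22B paper describes. For brevity it omits LayerNorm's learned scale and bias and the query/key/value projections, and the helper names are hypothetical. This is not the paper's implementation.

```python
import math

# Sketch of QK normalization (assumption: LayerNorm on queries and keys
# before the dot product; learned scale/bias and projections omitted).
# All function names here are hypothetical.

def layer_norm(vec, eps=1e-6):
    """Shift a vector to zero mean and scale it to (about) unit variance."""
    mean = sum(vec) / len(vec)
    var = sum((x - mean) ** 2 for x in vec) / len(vec)
    return [(x - mean) / math.sqrt(var + eps) for x in vec]


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_logits(query, keys, qk_norm=True):
    """Scaled dot-product logits of one query against every key.

    With qk_norm=True each vector is layer-normalized first, so its norm is
    at most sqrt(d) and every logit lies in [-sqrt(d), sqrt(d)].
    """
    d = len(query)
    if qk_norm:
        query = layer_norm(query)
        keys = [layer_norm(k) for k in keys]
    return [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]


def attention(queries, keys, values, qk_norm=True):
    """Single-head attention: each output is a softmax-weighted sum of values."""
    outputs = []
    for query in queries:
        weights = softmax(attention_logits(query, keys, qk_norm))
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs


# Usage: large raw queries/keys produce huge logits unless normalized.
q = [[1000.0, -2000.0, 500.0, 3000.0]]
k = [[3000.0, 1000.0, -1000.0, 0.0], [-500.0, 2500.0, 1000.0, -3000.0]]
print(attention_logits(q[0], k, qk_norm=False))  # → [250000.0, -7000000.0]
print(attention_logits(q[0], k, qk_norm=True))   # each value within [-2, 2]
```

In this simplified version the normalized logits stay within ±sqrt(d) however large the raw queries and keys grow. Without normalization, logits that grow with the weights push the softmax toward near one-hot attention, which the ViT-22B authors report as a cause of training divergence.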

Research Brief: Breakthrough Enables Perfectly Secure Secret Communications

A team from CMU and Oxford University has developed an algorithm that conceals sensitive information so effectively that it is impossible to detect, like the map image hidden in the cat ...

Soumith Chintala: PyTorch

On the past and present of machine learning frameworks, and the story of PyTorch and its creator.

Twitter's Recommendation Algorithm

This post explains how Twitter's recommendation algorithm distills the roughly 500 million Tweets posted daily down to a handful of top Tweets that show up on each user's For You timeline.

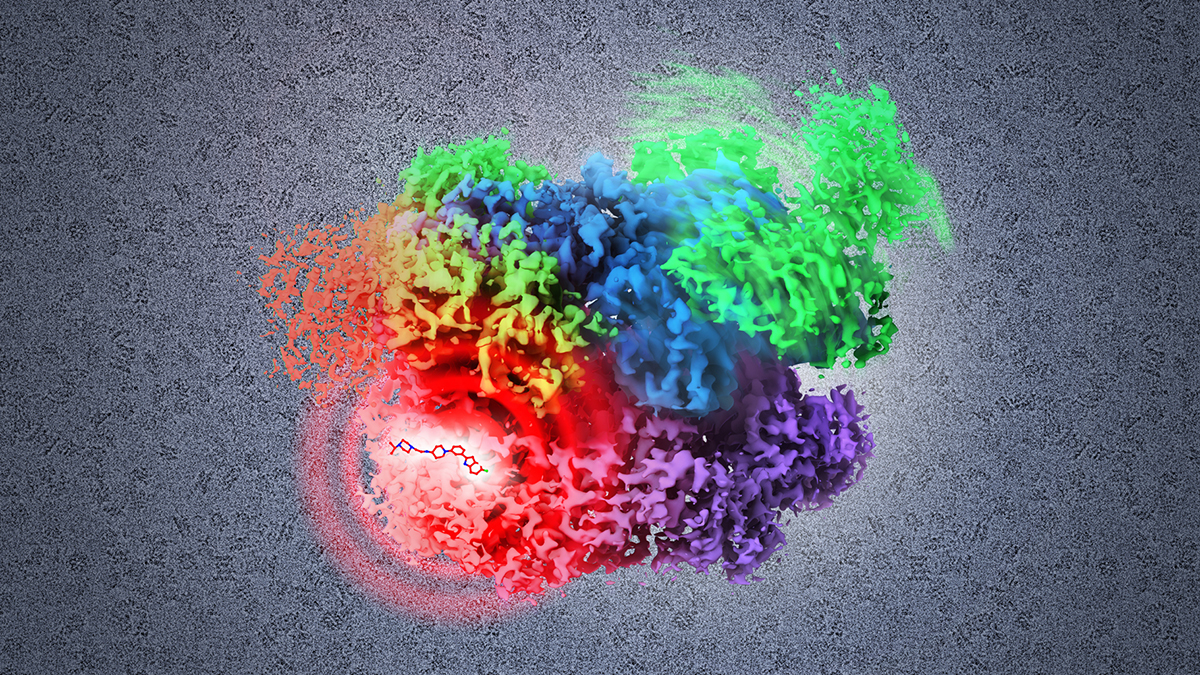

Researchers unveil BioTranslator, a machine learning model that bridges biological data and text to accelerate biomedical discovery

Biomedical research has yielded troves of data on protein function, cell types, gene expression and drug formulas that hold tremendous promise for assisting scientists in responding to ...

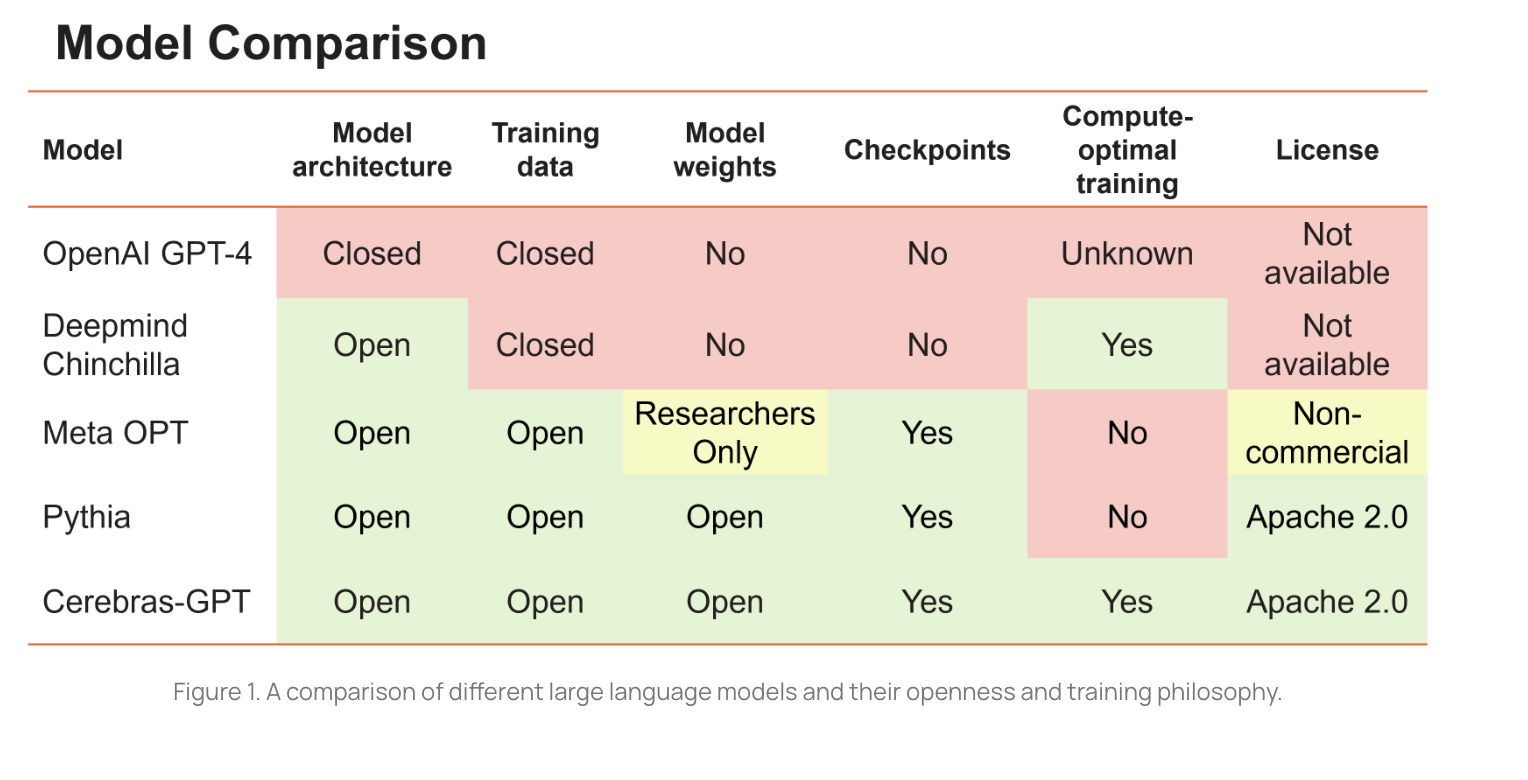

Towards Reinforcement Learning with AI Feedback (RLAIF). What open-sourced foundation models, instruction tuning, and other recent events mean for the future of AI

A few weeks back I shared my thoughts on how things were going to evolve in the race to build better/larger/smarter generative AI models, and particularly LLMs. Here is what I had to say:

Chris Potts: AI language models have large gaps to address, even as they move toward understanding

The professor of linguistics and expert in large language models discusses their fast-growing capabilities in understanding, their limitations, and where he focuses his efforts.