N-gram, Language model, Information retrieval, Speech recognition, Natural language processing, Learning

LinkBERT: Improving Language Model Training with Document Link

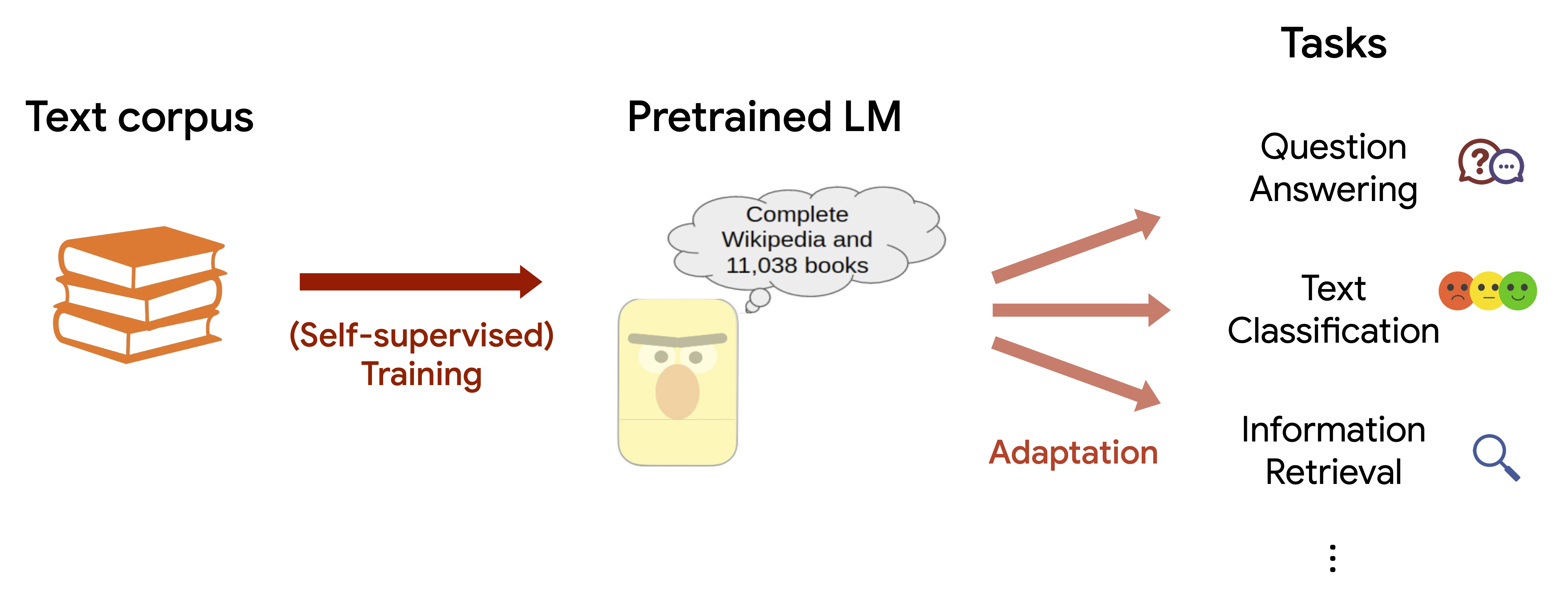

Language Model Pretraining: Language models (LMs), like BERT and the GPT series, achieve remarkable performance on many natural language processing (NLP) tasks. They are now the foundation of today’s NLP systems. These models serve important roles in products and tools that we use ...

NLP Research Highlights — Issue #1

Introducing a new dedicated series to highlight the latest interesting natural language processing (NLP) research.

Top NLP Research Papers With Business Applications From ACL 2019

We collect human explanations for commonsense reasoning in the form of natural language sequences and highlighted annotations in a new dataset called Common Sense Explanations (CoS-E). On ...

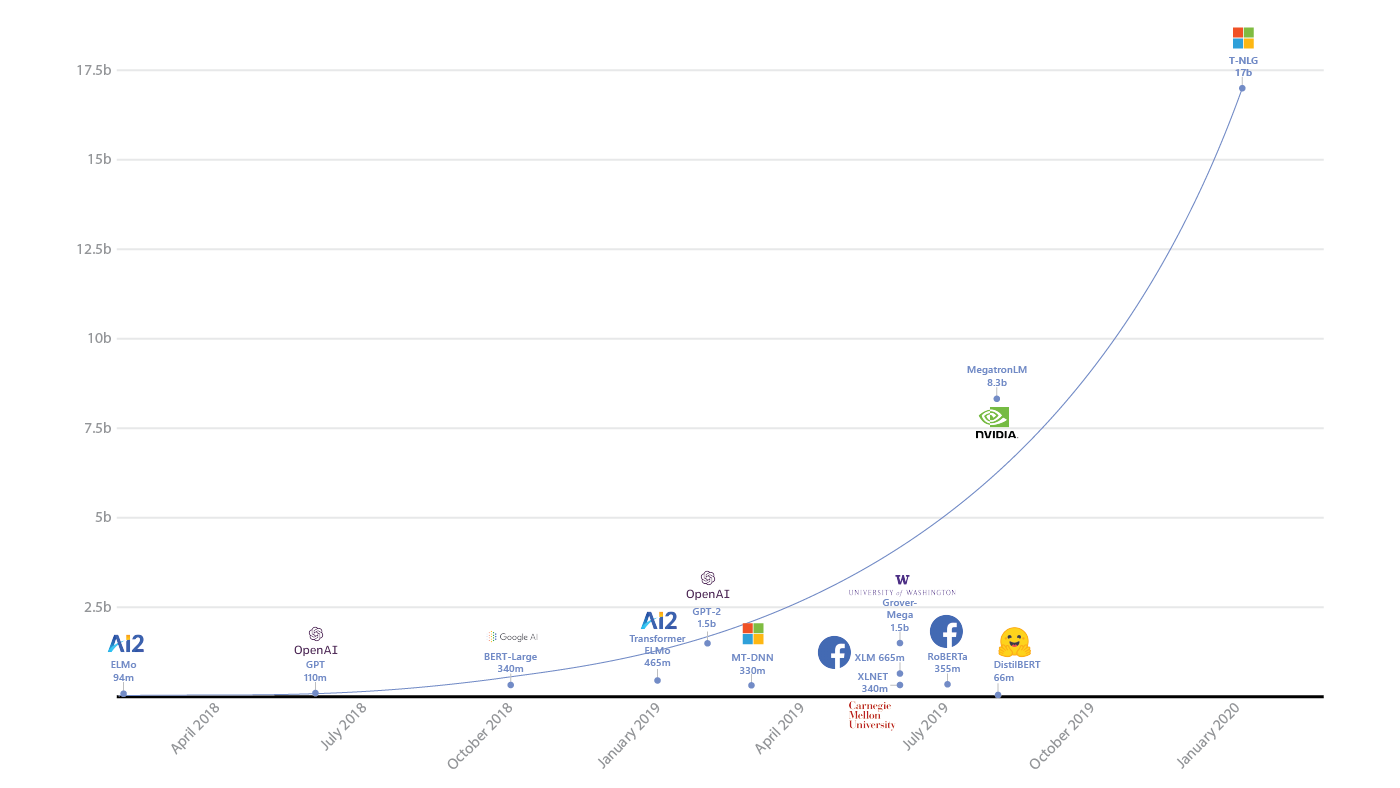

Turing-NLG: A 17-billion-parameter language model by Microsoft

Turing Natural Language Generation (T-NLG) is a 17 billion parameter language model by Microsoft that outperforms the state of the art on many downstream NLP tasks. We present a demo of the ...

Why the new AI/NLP language model GPT-3 is a big deal

Why GPT-3 matters at a high level: GPT-3 feels like the first time I used email, the first time I went from a command line text interface to a graphical user interface (GUI), or the first ...

China’s GPT-3? BAAI Introduces Superscale Intelligence Model ‘Wu Dao 1.0’

The Beijing Academy of Artificial Intelligence (BAAI) releases Wu Dao 1.0, China’s first large-scale pretraining model.

NATURAL LANGUAGE PROCESSING (NLP) SYMPOSIUM

Held virtually; event open to Vector researchers and industry sponsors only. The Vector Institute is hosting a Natural Language Processing (NLP) Symposium showcasing the NLP project ...

74 Summaries of Machine Learning and NLP Research

My previous post on summarising 57 research papers turned out to be quite useful for people working in this field, so it is about time…

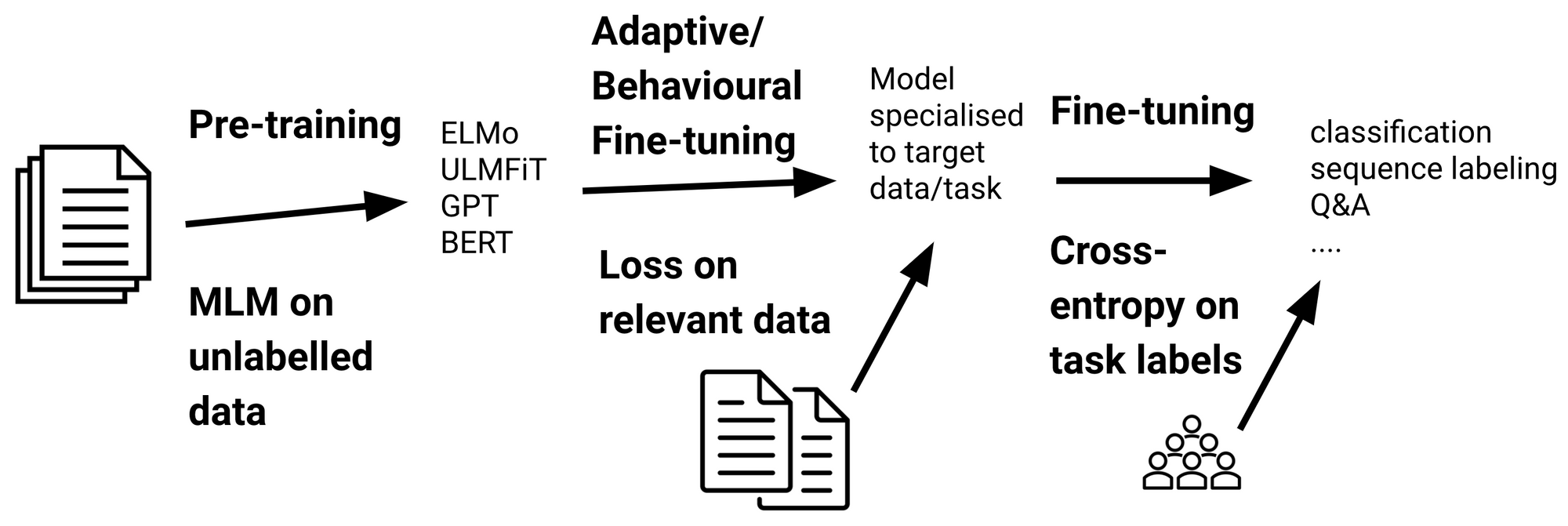

Recent Advances in Language Model Fine-tuning

This article provides an overview of recent methods to fine-tune large pre-trained language models.

Transfer Learning in NLP: A Survey

The limitations of deep learning models, such as requiring a large amount of data to train models and also demand for huge computing…

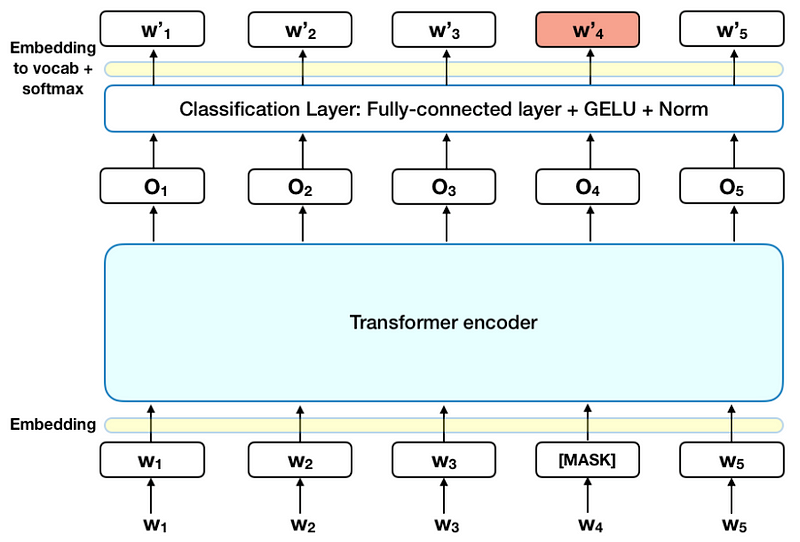

BERT: State of the Art NLP Model, Explained

BERT has caused a stir in the Machine Learning community by presenting state-of-the-art results in a wide variety of NLP tasks, including Question Answering (SQuAD v1.1), Natural Language ...

Stochastic Parrots: How Natural Language Processing Research Has Gotten Too Big for Our Own Good

This article explains the complexities of language models for readers to grasp their limitations and societal impact.

Google’s BERT changing the NLP Landscape

We write a lot about open problems in Natural Language Processing. We complain a lot when working on NLP projects. We pick on inaccuracies and blatant errors of different models.

Better Language Models and Their Implications

We’ve trained a large-scale unsupervised language model which generates coherent paragraphs of text, achieves state-of-the-art performance on many language modeling benchmarks, and performs ...

Use pre-trained financial language models for transfer learning in Amazon SageMaker JumpStart

Starting today, we’re releasing new tools for multimodal financial analysis within Amazon SageMaker JumpStart. SageMaker JumpStart helps you quickly and easily get started with machine ...

Top Recent NLP Research

Natural language processing (NLP) including conversational AI is arguably one of the most exciting technology fields today. NLP is important because it works to resolve ambiguity in ...

Facebook research being presented at ACL 2019

Researchers from Facebook are presenting a wide range of research at the 2019 meeting of the Association for Computational Linguistics (ACL).

Can Global Semantic Context Improve Neural Language Models?

Apple Machine Learning Journal publishes posts written by Apple engineers about their work using machine learning technologies to help build innovative products for millions of people ...

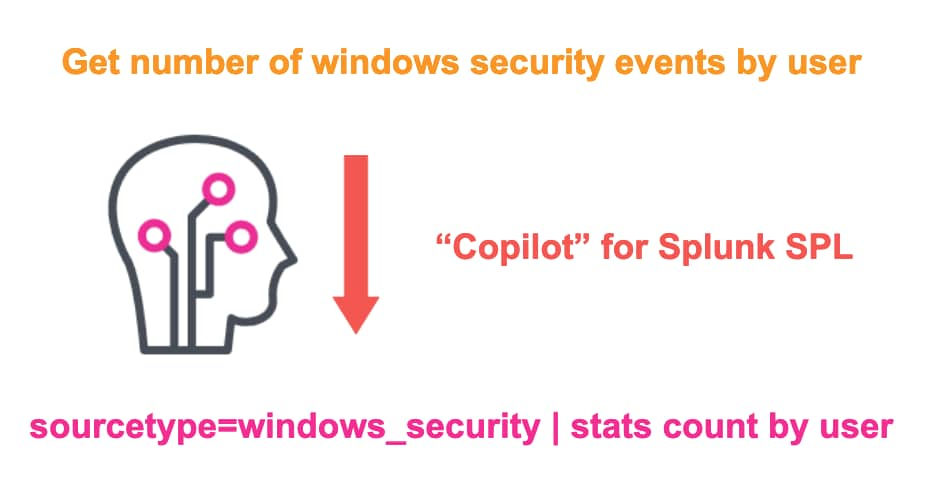

Training a 'Copilot' for Splunk SPL and Increasing Model Throughput by 5x With NVIDIA Morpheus

Get a closer look into our research collaboration with the team at NVIDIA Morpheus – an open application framework for cybersecurity providers – as we set out to build our own 'Copilot' for ...

The major advancements in Deep Learning in 2018

Deep Learning has changed the entire landscape over the past few years and its results are steadily improving. This article presents some of the main advances and accomplishments in Deep ...