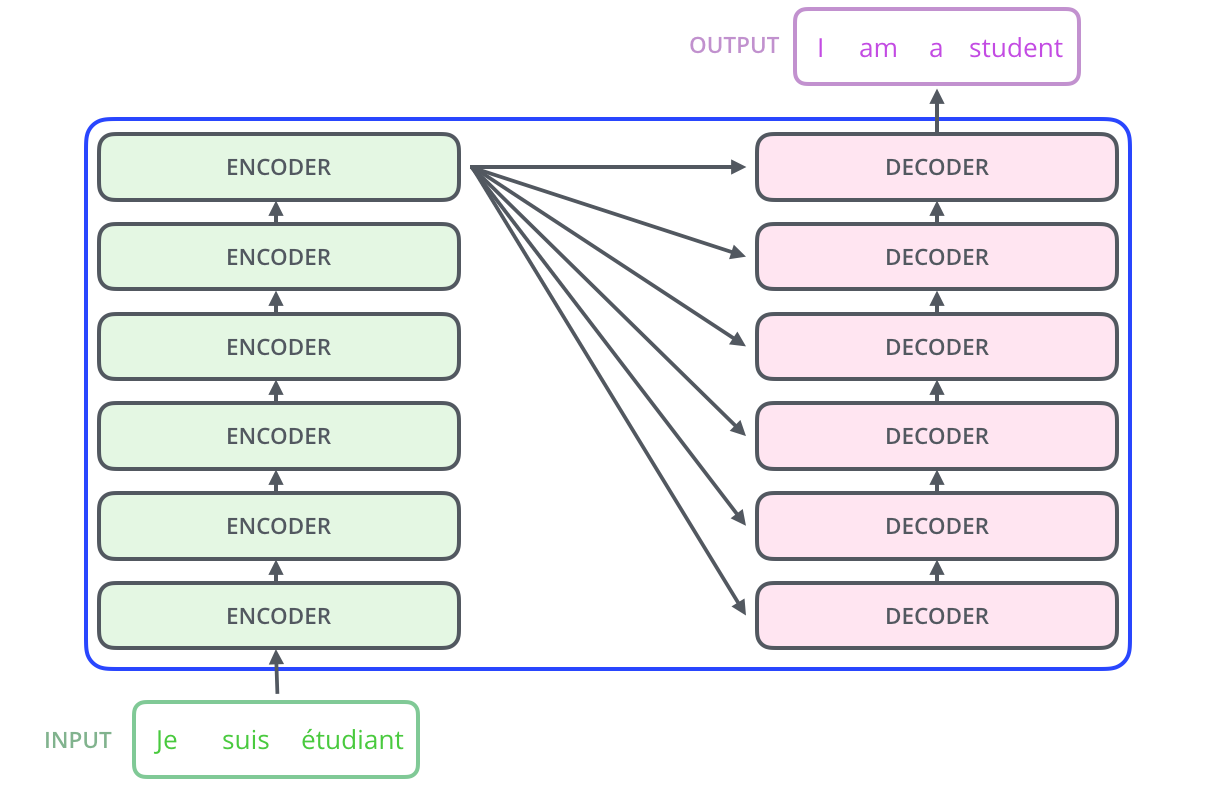

The Challenges of using Transformers in ASR

RT @rdesh26: Since 2018, there has been immense interest in using Transformers for ASR. In my new blog post, I look at the various challenges and the solutions people have proposed. #SpeechProc

https://t.co/qoUWwZpSnB

Since mid-2018 and throughout 2019, one of the most important directions of research in speech recognition has been the use of self-attention networks and transformers, as evident from the numerous papers exploring the subject. ...

The Illustrated Transformer

Discussions: Hacker News (65 points, 4 comments), Reddit r/MachineLearning (29 points, 3 comments) Translations: Chinese (Simplified), Korean Watch: MIT’s Deep Learning State of the Art ...

Generative Modeling with Sparse Transformers

We've developed the Sparse Transformer, a deep neural network which sets new records at predicting what comes next in a sequence—whether text, images, or sound. It uses an algorithmic ...

Found in translation: Building a language translator from scratch with deep learning

Building an English-French language translator neural network from scratch with deep learning.

RL in NMT: The Good, the Bad and the Ugly

Discussing good, bad and ugly practices of reinforcement learning in neural machine translation.

From Exploration to Production — Bridging the Deployment Gap for Deep Learning

This article introduces EMNIST; we develop and train models with PyTorch, translate them with the Open Neural Network eXchange format (ONNX), and serve them through GraphPipe. We will ...

How to Deploy Real-Time Text-to-Speech Applications on GPUs Using TensorRT

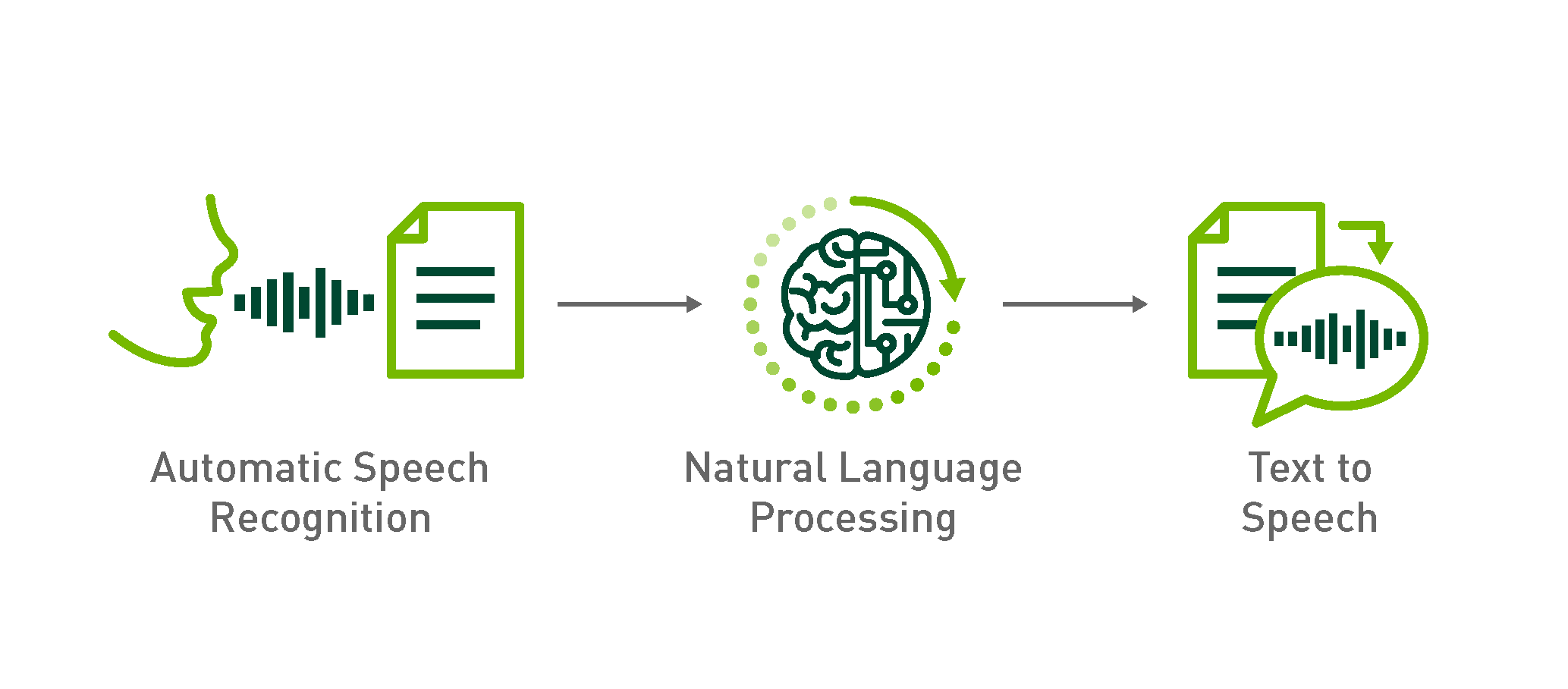

Conversational AI is the technology that allows us to communicate with machines like with other people. With the advent of sophisticated deep learning models, the human-machine ...

A Review of the Recent History of Natural Language Processing

This is the first blog post in a two-part series. The series expands on the Frontiers of Natural Language Processing session organized by Herman Kamper and me at the Deep …